Dead Internet Theory

Overview

Something happened to the internet, and by 2025, you didn’t need to be a conspiracy theorist to feel it. You could feel it in the uncanny valley of your social media feed, in the product reviews that read like they were written by the same alien pretending to be human, in the Google search results that sent you to AI-generated content farms instead of actual answers. You could feel it every time you wondered whether the person you were arguing with online was even a person at all.

The Dead Internet Theory is a conspiracy theory and cultural critique alleging that the internet has been largely “dead” since approximately 2016-2017 — meaning that the majority of online content, interactions, and traffic is now generated by artificial intelligence, bots, and algorithmic systems rather than real human beings. The theory proposes that governments and corporations have systematically replaced organic human discourse with manufactured content designed to manipulate public opinion, shape consumer behavior, and maintain social control.

What makes this theory genuinely unusual — and what separates it from, say, flat earth claims or reptilian bloodline theories — is that reality keeps catching up to it. When IlluminatiPirate posted the theory on a niche forum in January 2021, it sounded paranoid. By 2025, Imperva’s annual bot report found that automated traffic had crossed the 50% threshold of all internet activity for the first time. Amazon was drowning in AI-written books. Google’s search results were so polluted with AI-generated SEO content that users were appending “reddit” to every query just to find something a human being actually wrote. The word “slop” — a term for the endless tide of AI-generated garbage — had entered the mainstream lexicon.

The theory occupies a rare position in the conspiracy landscape: a claim that started as fringe paranoia and has been progressively, measurably validated — at least in its observational core. The conspiratorial claim of deliberate orchestration by governments and intelligence agencies remains unproven. But the observation that the internet is increasingly dominated by non-human content? That stopped being a theory sometime around 2024 and became a documented fact.

Origins & History

The Agora Road Manifesto

The Dead Internet Theory was formally articulated in a January 2021 post on the forum Agora Road’s Macintosh Cafe by a user called “IlluminatiPirate.” The post, titled “Dead Internet Theory: Most of the Internet is Fake,” argued that the internet had been effectively “dead” since 2016 or 2017, with most content and interactions replaced by AI-generated material orchestrated by government agencies and corporations.

IlluminatiPirate’s post was long, rambling, and earnest — equal parts manifesto and cri de coeur. It synthesized observations that had been circulating in various online communities for years. The author pointed to the perceived homogenization of online culture, the disappearance of quirky personal websites and independent forums, and the eerie sense that social media interactions had become formulaic and repetitive, as if generated by the same underlying system.

The post specifically alleged that the U.S. government, working through intelligence agencies like the CIA and NSA, had deployed sophisticated AI systems to flood the internet with synthetic content, manufactured discussions, and artificial engagement. The purpose, according to IlluminatiPirate, was to manipulate public opinion, suppress organic dissent, and maintain social control through an illusion of consensus.

Pre-History: The Feeling That Something Was Off

But IlluminatiPirate didn’t invent these ideas out of nothing. The sense that the internet was becoming less human had been simmering for years.

As early as 2016-2017, users on 4chan’s /x/ (paranormal) board discussed the perceived “death” of internet culture — the disappearance of genuine weirdness, the rise of corporate-friendly content, and the sense that online discourse had become a performance optimized for algorithms rather than a genuine exchange between human beings. Reddit users noted the same phenomenon: comment threads that felt scripted, trending topics that felt manufactured, and an internet that seemed to be talking to itself.

These discussions reflected a broader cultural malaise. The internet of the late 2000s and early 2010s — messy, anarchic, full of personal blogs and bizarre GeoCities pages and forum arguments between actual humans — had given way to something else entirely. By 2017, the internet was five platforms: Facebook, Google, YouTube, Twitter, and Amazon. Everything else was a rounding error. The independent web had been absorbed, and what replaced it felt sterile, optimized, and vaguely artificial.

There was also documented precedent that lent credibility to the paranoia. Russia’s Internet Research Agency had been exposed running massive bot and troll operations targeting Western social media during the 2016 U.S. election. China’s “50 Cent Army,” legions of state-sponsored commenters paid to shape online discourse, was well documented. If governments were already doing this at scale, the leap to “maybe they’re all doing it, and maybe most of what we see online is fake” wasn’t as large as it might seem.

Going Mainstream

The theory gained significant mainstream attention in August 2021 when The Atlantic published “Maybe You Missed It, but the Internet ‘Died’ Five Years Ago” by Kaitlyn Tiffany. The article treated the theory seriously as a cultural phenomenon, exploring why it resonated even if its conspiratorial claims were unsubstantiated. Subsequent coverage in The New York Times, Vice, Wired, and other major outlets brought the theory to a much broader audience.

Then ChatGPT happened.

OpenAI released ChatGPT on November 30, 2022, and the world changed. Within two months, the chatbot had over 100 million users. More importantly, it demonstrated that AI could generate human-sounding text at scale, cheaply, and with minimal human oversight. The implications for the Dead Internet Theory were immediately obvious, and people noticed: if anyone with a laptop could now generate unlimited human-sounding content, what would happen to the internet?

What happened was exactly what the theory predicted.

The 2023-2025 Explosion: When the Theory Became Reality

The Rise of AI Slop

By 2024, a new word had entered the internet lexicon: slop. Coined by analogy with spam, slop described the tidal wave of low-quality, AI-generated content flooding every corner of the web. Unlike traditional spam, which was usually obviously promotional, slop was insidious — it looked like real content, read like real content, and was designed to be indistinguishable from real content. It was just… worse. Blander. More generic. Missing the spark of actual human thought.

The term caught on because it described something everyone was experiencing simultaneously. Your Facebook feed was suddenly full of AI-generated images of Jesus holding shrimp, hyperrealistic photos of crying children asking for birthday wishes, and impossibly detailed fantasy landscapes — all posted by accounts that existed solely to farm engagement. Your Google search results returned pages of AI-written articles that rewrote the same information in slightly different words, none of which actually answered your question. The product review you were reading on Amazon sounded suspiciously like it was written by the same entity that wrote the product description.

Slop wasn’t a conspiracy. It was a business model.

Amazon: The AI Book and Review Apocalypse

Amazon became one of the most visible battlegrounds. By 2024, the Kindle marketplace was flooded with AI-generated books — often cobbled together by feeding prompts into ChatGPT and uploading the results with AI-generated covers. Some were obviously fake: cookbooks with recipes that didn’t work, travel guides for places the “author” had never visited, self-help books that were essentially rephrased Wikipedia articles. Others were more sophisticated, mimicking genuine authors’ styles closely enough to confuse buyers.

The problem wasn’t just the books themselves. AI-generated product reviews became epidemic across Amazon’s entire marketplace. Cybersecurity researchers found networks of thousands of bot accounts posting coordinated five-star reviews for products they’d never purchased, using language patterns clearly generated by large language models. Amazon reportedly removed hundreds of millions of suspected fake reviews annually, but the volume of new AI-generated reviews consistently outpaced removal efforts.

By 2025, the trust ecosystem that Amazon’s entire business depended on — the idea that reviews reflect real customer experiences — was visibly eroding. Third-party review analysis tools like Fakespot and ReviewMeta became essential for any serious online shopper, and even they struggled to keep up with increasingly sophisticated AI-generated reviews.

Social Media: The Engagement Farm

Social media platforms became perhaps the most dramatic demonstration of the Dead Internet Theory’s core premise. Facebook, in particular, was overwhelmed by AI-generated content farms.

The playbook was simple: create a page, post AI-generated images designed to trigger emotional responses (patriotic imagery, cute animals, religious content, outrage bait), and harvest engagement. The Facebook algorithm — designed to maximize time-on-platform — couldn’t distinguish between engagement with genuine human content and engagement with AI-generated slop. Worse, the slop often performed better, because it was optimized specifically for algorithmic amplification in ways that genuine human content was not.

Stanford Internet Observatory researchers documented networks of hundreds of coordinated pages posting identical AI-generated content across Facebook, Instagram, and TikTok. Some networks traced back to click farms in Southeast Asia and West Africa, where operators used AI tools to generate and post content at industrial scale. Others appeared to be fully automated — no humans in the loop at all, just bots posting AI content to other bots.

On X (formerly Twitter), the problem was, if anything, worse. Following Elon Musk’s acquisition and subsequent staff reductions in 2022-2023, the platform’s bot detection and removal capabilities were significantly diminished. AI-generated reply bots proliferated, filling the replies to popular posts with engagement-farming content, crypto scams, and AI-generated commentary designed to look like genuine human responses. By 2024, it was common for the majority of replies to viral tweets to come from bot accounts.

TikTok saw the emergence of AI-generated video content — synthetic voices reading AI-written scripts over stock footage or AI-generated images. Entire channels operated without any human involvement beyond initial setup, churning out dozens of videos daily on topics from true crime to historical facts, all produced entirely by AI.

Google Search: The Death Spiral

If one phenomenon exemplified the Dead Internet Theory’s creeping validation, it was the decline of Google search.

For twenty years, Google search was the internet’s front door. You had a question, Google had the answer. But by 2024, a consensus had formed among users, journalists, and even Google’s own engineers that search quality had significantly degraded — and AI-generated content was a primary culprit.

The problem was structural. Google’s search algorithm ranked pages based on signals that AI-generated content could easily manufacture: keyword density, content length, internal linking, domain authority built through volume. Content farms discovered they could generate thousands of AI-written pages targeting specific search queries, rank them through SEO manipulation, and monetize the traffic through advertising. The result was a search ecosystem increasingly populated by AI-generated pages that technically contained the keywords you searched for but didn’t actually help you.

Users responded with a telling behavioral shift: appending “reddit” to their Google searches. The reasoning was simple — Reddit content was (mostly) written by actual humans discussing actual experiences, and it was often more useful than the AI-generated article farms that dominated standard results. Google noticed the trend and began featuring Reddit results more prominently, essentially admitting that its own ranking system was failing to surface human-generated content.

Google rolled out multiple algorithm updates in 2024-2025 targeting AI-generated SEO content, but the arms race dynamic made these partially effective at best. AI content generators evolved to evade detection almost as quickly as Google updated its algorithms. The fundamental problem remained: generating AI content was cheap and fast, while creating genuine human content was expensive and slow. The economics favored slop.

The Bot Traffic Tipping Point

Then came the number that made headlines.

Imperva’s 2025 “Bad Bot Report” — the cybersecurity firm’s annual analysis of internet traffic — found that automated bot traffic had crossed the 50% threshold of all internet activity for the first time in the report’s history. More than half of all internet traffic was now generated by non-human entities.

This single statistic was staggering in its implications. It meant that if you zoomed out and looked at the internet as a whole — every page load, every API call, every interaction — the majority of it was machines talking to machines, bots scraping other bots, and automated systems interacting with other automated systems. The humans were the minority.

The number required context, of course. Much of this bot traffic was benign: search engine crawlers indexing the web, monitoring services checking site uptime, APIs communicating between services. Not all of it was the malicious, deceptive bot activity that the Dead Internet Theory described. But the trend line was unmistakable: bot traffic had been growing year over year for a decade, and the introduction of generative AI had accelerated the trend dramatically.

Imperva’s breakdown was illuminating. “Bad bot” traffic — scrapers, spam bots, credential-stuffing attacks, AI content generators, and other malicious automated traffic — accounted for roughly 33% of all internet traffic in 2024, up from 32% in 2023 and continuing a steady climb. The remaining automated traffic came from “good bots” like search crawlers. But the line between “good” and “bad” bots was increasingly blurry. Was an AI training crawler that scraped entire websites without permission a good bot or a bad one? What about an AI content generator that rewrote human-written articles without attribution?
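The arithmetic behind that breakdown can be made explicit. The following is a minimal sketch that takes the round figures quoted above as inputs (automated traffic at the 50% threshold, bad bots at roughly 33%); the function name `traffic_breakdown` and its parameters are hypothetical, chosen only for illustration, and because the report describes automated traffic as having crossed 50%, the human share computed here is an upper bound rather than an exact value.

```python
def traffic_breakdown(automated_share: float, bad_bot_share: float) -> dict[str, float]:
    """Split total traffic into human, good-bot, and bad-bot shares.

    All shares are fractions of total traffic (0.0 to 1.0). The inputs are
    the article's rounded figures, not exact values from the report.
    """
    if not 0.0 <= bad_bot_share <= automated_share <= 1.0:
        raise ValueError("expected 0 <= bad_bot_share <= automated_share <= 1")
    return {
        "human": 1.0 - automated_share,
        "good_bot": automated_share - bad_bot_share,
        "bad_bot": bad_bot_share,
    }


# Assumed inputs: the 50% threshold and the ~33% bad-bot share cited in the text.
shares = traffic_breakdown(automated_share=0.50, bad_bot_share=0.33)
for label, share in shares.items():
    print(f"{label}: {share:.0%}")
```

With these inputs, "good bots" come out at roughly 17% of all traffic, which is why the classification question matters: reclassifying even part of that remainder (for example, unauthorized AI training crawlers) would noticeably change the "bad bot" headline number.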

AI-Generated News: The NewsGuard Findings

The proliferation of entirely AI-generated news websites represented perhaps the most alarming dimension of the dead internet phenomenon. NewsGuard, a media watchdog organization, had identified over 1,000 AI-generated news websites by late 2023. By mid-2025, that number had grown significantly.

These weren’t obvious content farms with garbled text. Many presented themselves as legitimate local news outlets, complete with professional layouts, staff pages featuring AI-generated headshots, and articles that read like competent (if bland) local journalism. They covered real events — drawing from wire services and other legitimate sources — but the articles were written by AI, the “journalists” didn’t exist, and the sites’ primary purpose was advertising revenue.

The implications for democracy were not abstract. Local journalism in the United States had been in crisis for decades, with newspaper closures accelerating throughout the 2010s and 2020s. Into that vacuum stepped AI-generated local news sites that looked real but weren’t — filling Google News feeds and social media shares with content that had no editorial oversight, no fact-checking, no accountability, and no actual human journalists behind it.

Some of these sites were eventually found to be running politically motivated content alongside their AI-generated news articles, blurring the line between automated content generation and deliberate disinformation. The potential for AI-generated “news” to be used for political manipulation — seeding narratives, manufacturing consensus, creating the illusion of broad-based coverage — was obvious and deeply concerning.

The Philosophical Split: Two Dead Internets

By 2025, the Dead Internet Theory had effectively split into two distinct claims that people often conflated:

Version 1: The Conspiracy

The original, conspiratorial version held that the replacement of human internet activity with AI-generated content was a deliberate, coordinated program orchestrated by intelligence agencies — particularly the CIA, NSA, and their counterparts in other nations — working in partnership with major technology companies. This version posited a specific moment of “death” (around 2016-2017) and implied centralized, intentional control.

This version remains unsubstantiated. No whistleblower has come forward. No leaked documents have revealed such a program. While government social media manipulation operations are documented (Russia’s Internet Research Agency, China’s 50 Cent Army, various state-sponsored astroturfing campaigns), these are specific, bounded operations — not the total replacement of organic internet activity that the conspiracy version describes.

Version 2: The Observation

The second version — the one that most people now mean when they reference the Dead Internet Theory — is less a conspiracy theory and more a documented observation: the internet is increasingly dominated by non-human content, and this process is accelerating so rapidly that the internet as a meaningfully human space is dying or already dead.

This version doesn’t require a conspiracy. It just requires capitalism. AI-generated content is cheaper than human-generated content. Bots are cheaper than people. Automated engagement farming generates revenue with minimal overhead. Every economic incentive in the digital ecosystem pushes toward more automation and less human involvement. The “death” of the internet isn’t a plot — it’s a market outcome.

This version, uncomfortably, is increasingly difficult to dispute.

Evidence: What’s Documented

The evidence supporting the observational version of the Dead Internet Theory has grown substantially:

Bot Traffic Data

- Imperva’s 2025 Bad Bot Report found automated traffic exceeded 50% of all internet activity for the first time

- Bad bot traffic specifically (scrapers, spam bots, credential stuffers, AI generators) hit approximately 33% of all traffic

- Bot traffic has increased year-over-year for every year of Imperva’s tracking, with generative AI accelerating the trend from 2023 onward

- Barracuda Networks research found that bots constituted over 60% of traffic to e-commerce sites specifically

Fake Accounts and Synthetic Users

- Facebook/Meta has reported removing hundreds of millions to more than a billion fake accounts per quarter, with totals in some years exceeding 5 billion

- Twitter/X’s bot problem became a central issue during Elon Musk’s 2022 acquisition bid, with estimates ranging from 5% (Twitter’s claim) to over 20% (independent researchers) of active accounts being bots

- LinkedIn reported significant growth in AI-generated fake profiles used for scams, espionage, and influence operations

- Dating apps reported epidemic levels of AI-powered romance scam bots

- A 2024 study from Indiana University found that between 9% and 15% of active Twitter accounts showed characteristics consistent with bot behavior

AI Content Proliferation

- NewsGuard tracked over 1,000 AI-generated news websites by late 2023, with numbers continuing to grow

- Amazon removed millions of suspected AI-generated book listings and reviews

- Academic publishers retracted hundreds of papers found to contain AI-generated text, including telltale phrases like “As an AI language model”

- AI-generated images flooded social media platforms, with Meta reporting removal of millions of AI-generated engagement-farming posts

- YouTube saw proliferation of AI-generated video channels using synthetic voices and AI imagery

- AI-generated music flooded Spotify and other streaming platforms, with some estimates suggesting thousands of AI tracks uploaded daily

Search Quality Degradation

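- A 2024 study by researchers at Leipzig University, Bauhaus University Weimar, and ScaDS.AI found that top-ranked results for product-review queries were heavily SEO-optimized and monetized and showed signs of lower text quality; the authors warned that AI-generated content was likely to make the problem worse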

- Google implemented multiple “helpful content” algorithm updates in 2024-2025 specifically targeting AI-generated spam

- Users overwhelmingly shifted to adding “reddit” to searches, a trend Google acknowledged by featuring Reddit results more prominently

- The SEO industry openly discussed “programmatic SEO” — using AI to generate thousands of pages targeting long-tail keywords — as a standard business practice

Documented Government Bot Operations

- Russia’s Internet Research Agency operations were extensively documented in the Mueller investigation

- China’s “50 Cent Army” of government-paid online commenters is well-documented by researchers at Harvard and elsewhere

- Israel’s Act.IL app coordinated mass online engagement on behalf of Israeli government positions

- Iran, Saudi Arabia, and multiple other nations were caught running state-sponsored bot and troll operations on Western social media platforms

- The U.S. military’s CENTCOM was revealed to be running its own social media influence operations targeting foreign audiences

Evidence: What’s Unsubstantiated

For all the documented evidence supporting the observational version, the conspiratorial version of the theory remains unsupported:

- No coordinated replacement program: No whistleblower, leaked document, or investigative report has revealed a coordinated government-corporate program to deliberately replace human internet activity with AI-generated content

- No specific “death date”: The theory’s claim that the internet “died” around 2016-2017 is not supported by data. Traffic analysis shows gradual trends, not a discrete transition. Human internet usage continued to grow through this period and beyond

- Conflation of independent phenomena: The theory treats algorithmic curation, bot activity, AI content generation, platform consolidation, and search degradation as components of a single coordinated conspiracy. In reality, these are largely independent phenomena driven by different actors and incentives

- Billions of real humans are still online: Despite the growth in bot traffic, billions of real people continue to create and consume content daily. Private messaging, video calls, original content creation, and genuine human interaction continue at massive scale

- Nostalgia bias: Some of the theory’s emotional appeal relies on romanticizing an older internet that was also full of spam, scams, and manipulation — just at a smaller scale and in different forms

The “Prove You’re Human” Era

One of the most culturally telling developments of the 2024-2025 period was the proliferation of human verification systems and the growing anxiety they reflected. CAPTCHAs — those irritating “click all the traffic lights” puzzles — had existed since the early 2000s. But their cultural significance shifted dramatically in the age of AI.

When you had to prove you were human to post a comment, create an account, or even load a webpage, the subtext was impossible to ignore: the default assumption had shifted. You were now assumed to be a bot unless proven otherwise. The internet’s operating assumption about its users had fundamentally inverted.

Platforms experimented with various approaches to the problem. X (Twitter) introduced paid verification as a partial bot-filtering mechanism, though critics argued it simply created a two-tier system where bots that paid $8 per month gained legitimacy. Other platforms explored biometric verification, phone number requirements, and behavioral analysis to distinguish human from automated users.

The irony was thick. The internet — built by humans, for humans, as a tool for human communication — now required its human users to continuously prove their humanity. The machines were the default inhabitants. The humans were the ones who needed documentation.

Cultural Impact

The NPC Meme and Digital Solipsism

The Dead Internet Theory reinforced and was reinforced by the “NPC” (non-player character) meme, which emerged from gaming culture to describe people whose behavior seemed scripted or algorithmic. Originally a political insult (used by the right to mock liberals, then by the left to mock conservatives), the NPC concept took on new resonance in the dead internet context. Were those accounts posting identical talking points actually people behaving like NPCs? Or were they literally not people at all?

This question produced a kind of digital solipsism — the growing suspicion that you might be the only real person in any given online interaction. By 2025, this wasn’t just a philosophical thought experiment. It was a reasonable concern. When you argued with someone on Twitter, you genuinely could not be sure whether you were engaging with a human being, an AI chatbot, a state-sponsored troll operating under a fake identity, or a bot programmed to generate engagement through controversy.

The Authenticity Premium

The dead internet phenomenon produced a paradoxical cultural response: authenticity became the most valuable commodity in an economy of fakes. Content that was verifiably, obviously human-created commanded attention precisely because it was becoming rare.

This explained the explosive growth of platforms and formats that were harder for AI to replicate. Live streaming, with its unscripted moments and real-time interaction, gained value partly because it was difficult to fake. Podcasts, especially those with long, unstructured conversations, thrived because AI couldn’t yet convincingly replicate the rhythms of genuine human dialogue over hours. The resurgence of physical media — vinyl records, print books, handwritten letters — was partly an authenticity signal, a way of asserting human origin in a world drowning in synthetic content.

Academic and Policy Response

The theory, in its observational form, has increasingly informed serious academic and policy discussions. Researchers studying computational propaganda, platform governance, and AI safety have engaged with the dead internet framework as a useful lens for understanding the transformation of online spaces.

The European Union’s AI Act, which entered into force in 2024 and began applying in phases from 2025, includes provisions requiring AI-generated content to be labeled as such, a regulatory response to the same concerns the Dead Internet Theory articulated. Similar legislation was proposed (though not yet enacted) in the United States, United Kingdom, and other jurisdictions.

The broader question the theory raises — at what point does an information ecosystem become too polluted with synthetic content to function as a space for genuine human discourse? — has become a central concern of AI ethics, platform governance, and democratic theory.

The Self-Fulfilling Prophecy Problem

Perhaps the most unsettling dimension of the Dead Internet Theory is its self-fulfilling quality. The more AI-generated content floods the internet, the less incentive real humans have to participate in online spaces. Why write a thoughtful blog post when AI-generated content farms will outrank it in search results? Why leave a genuine product review when it will be buried under thousands of fake ones? Why engage in a social media discussion when half the participants might be bots?

This creates a death spiral. AI content drives out human content, which makes the internet less valuable for humans, which reduces human participation, which makes the remaining internet even more dominated by AI content. The theory doesn’t need a conspiracy to be true. It just needs a feedback loop.
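The loop described above can be illustrated with a toy simulation. This is a sketch under loudly stated assumptions, not a model fitted to any data: the starting volumes, the AI growth rate, and the human dropout rate are all hypothetical numbers, and the function name `simulate_feedback_loop` is invented for this example. It only shows the qualitative dynamic: if AI content grows cheaply and humans leave in proportion to how much of what they see is synthetic, the AI share rises every step.

```python
def simulate_feedback_loop(
    steps: int = 10,
    human: float = 100.0,    # hypothetical starting volume of human content
    ai: float = 10.0,        # hypothetical starting volume of AI content
    ai_growth: float = 0.5,  # assumed per-step growth rate of AI content
    dropout: float = 0.3,    # assumed human sensitivity to the AI share
) -> list[float]:
    """Return the AI share of all content at the start of each step."""
    history = []
    for _ in range(steps):
        ai_share = ai / (ai + human)
        history.append(ai_share)
        # Humans withdraw in proportion to how synthetic the space feels.
        human *= 1.0 - dropout * ai_share
        # AI content keeps growing because it is cheap to produce.
        ai *= 1.0 + ai_growth
    return history


history = simulate_feedback_loop()
for step, share in enumerate(history):
    print(f"step {step}: AI share = {share:.1%}")
```

Changing `dropout` to zero removes the human response entirely, and the AI share still climbs on growth alone; the feedback term simply makes the climb faster, which is the point of the paragraph above.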

By 2025, there were signs this feedback loop was operating. Small-web advocates documented the continued decline of independent blogs and personal websites. Forum communities that had survived the social media era finally began dying as AI-generated posts degraded discussion quality. The internet’s biodiversity — the variety of human voices and perspectives that once made it extraordinary — was measurably shrinking even as the total volume of content exploded.

The internet wasn’t dead. But something was dying. The question was whether it could be saved — or whether the economic incentives driving the dead internet phenomenon were simply too powerful to resist.

Timeline

- 2016-2017 — Alleged period when the internet “died,” according to the theory; concurrent with documented Russian bot operations targeting Western social media

- 2017 — Early discussions on 4chan’s /x/ board about perceived internet homogenization and artificial discourse

- January 2021 — IlluminatiPirate publishes the foundational Dead Internet Theory post on Agora Road’s Macintosh Cafe forum

- August 2021 — The Atlantic publishes widely shared article examining the theory, bringing it to mainstream attention

- November 2022 — OpenAI releases ChatGPT, making AI text generation accessible to everyone and dramatically accelerating AI content production

- 2023 — NewsGuard identifies 1,000+ AI-generated news websites operating across the web

- 2023 — Imperva reports bad bot traffic at highest recorded levels (32% of all traffic)

- 2023 — Amazon acknowledges massive AI-generated book and review problem; begins large-scale removal efforts

- 2024 — The term “slop” enters mainstream usage to describe AI-generated internet garbage

- 2024 — Google rolls out multiple algorithm updates targeting AI-generated SEO content, acknowledging the problem’s severity

- 2024 — Leipzig University study documents the prevalence of low-quality, SEO-optimized content in search results and warns that AI-generated content will worsen it

- 2024 — Indiana University study estimates 9-15% of active Twitter accounts exhibit bot characteristics

- 2024 — Facebook/Meta removes millions of AI-generated engagement-farming posts

- 2025 — Imperva’s annual report finds bot traffic exceeds 50% of all internet activity for the first time in history

- 2025 — EU AI Act begins applying in phases; its provisions include labeling requirements for AI-generated content

- 2025-2026 — AI-generated content proliferation continues accelerating across all major platforms; “dead internet” becomes a mainstream cultural reference point rather than a fringe conspiracy

Sources & Further Reading

- Tiffany, Kaitlyn. “Maybe You Missed It, but the Internet ‘Died’ Five Years Ago.” The Atlantic, August 2021

- IlluminatiPirate. “Dead Internet Theory: Most of the Internet is Fake.” Agora Road’s Macintosh Cafe, January 2021

- Imperva. Bad Bot Report. Annual publication, 2020-2025

- Meta. “Community Standards Enforcement Report.” Meta Transparency Center, quarterly

- NewsGuard. “AI-Generated News Websites Tracker.” 2023-2025

- Bevendorff, Janek, et al. “Is Google Getting Worse? A Longitudinal Investigation of SEO Spam in Search Engines.” Leipzig University, Bauhaus University Weimar, and ScaDS.AI, 2024

- Bradshaw, Samantha and Philip N. Howard. “The Global Disinformation Order.” Oxford Internet Institute, 2019

- Mueller, Robert S. Report on the Investigation into Russian Interference in the 2016 Presidential Election. U.S. Department of Justice, 2019

- Yang, Kai-Cheng, et al. “Prevalence of Low-Credibility Information on Twitter.” Indiana University, 2024

- Barracuda Networks. “Bot Attacks: Top Threats and Trends.” 2024

- European Commission. “AI Act: First Regulation on Artificial Intelligence.” 2024

- Sadowski, Jathan. “The Internet Is Dead.” The Baffler, 2023

- “The Age of AI Slop.” The Verge, 2024

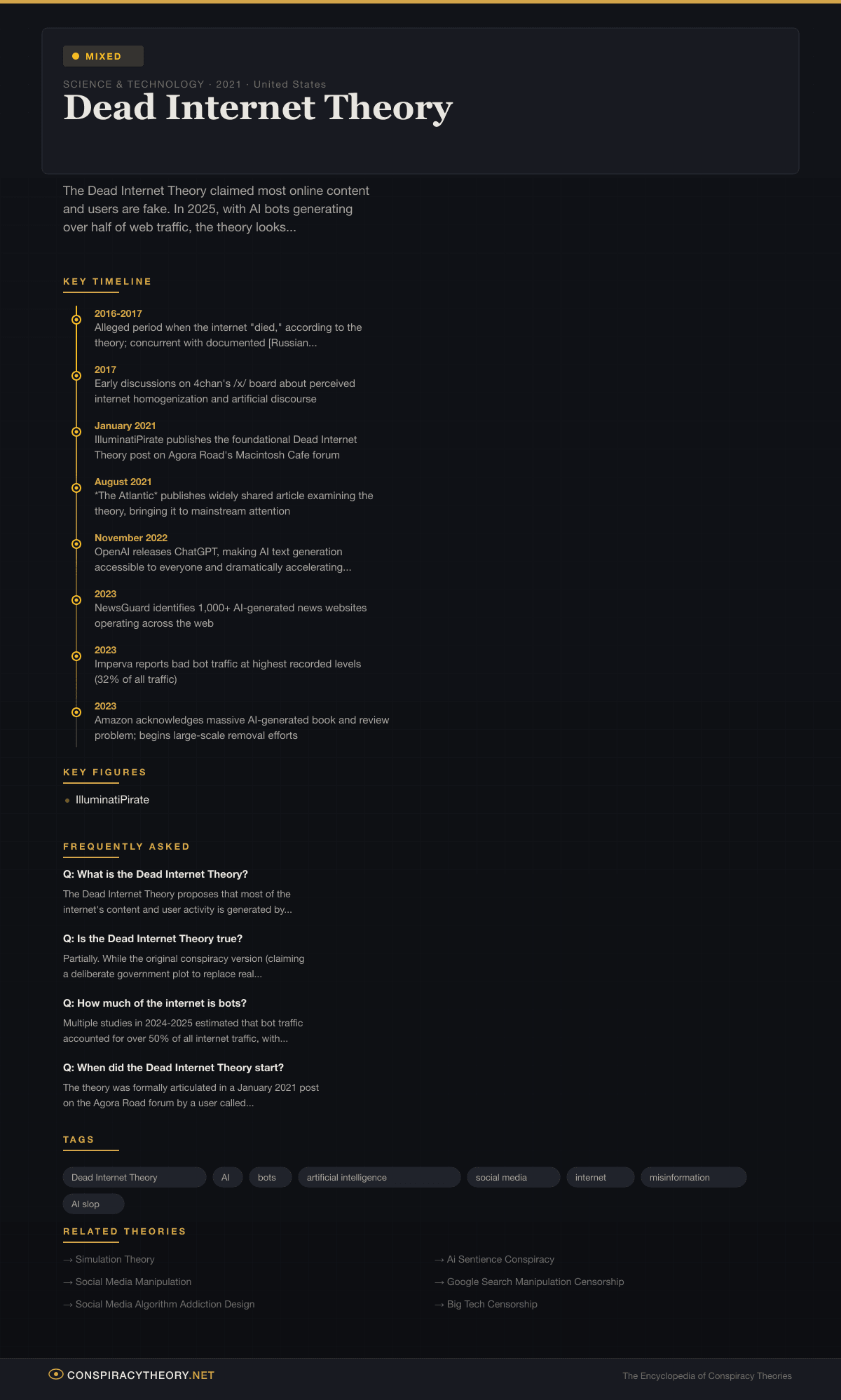

Infographic

Share this visual summary. Right-click to save.